Spatial data pipelines have a habit of being embarrassingly parallel. You have a million coordinate pairs. You need to convert each one to an H3 cell index, look up some attribute in a database, and write the enriched record somewhere. Each record is independent. In Python this is a loop, and loops are slow. In Go, this is an invitation to use goroutines.

This post walks through a production-ready pattern for parallel spatial processing in Go: the fan-out/fan-in pipeline. It is the same pattern used to build high-throughput geo-enrichment services, and it is worth understanding from first principles.

The Problem: Sequential Geo-Processing Is A Bottleneck

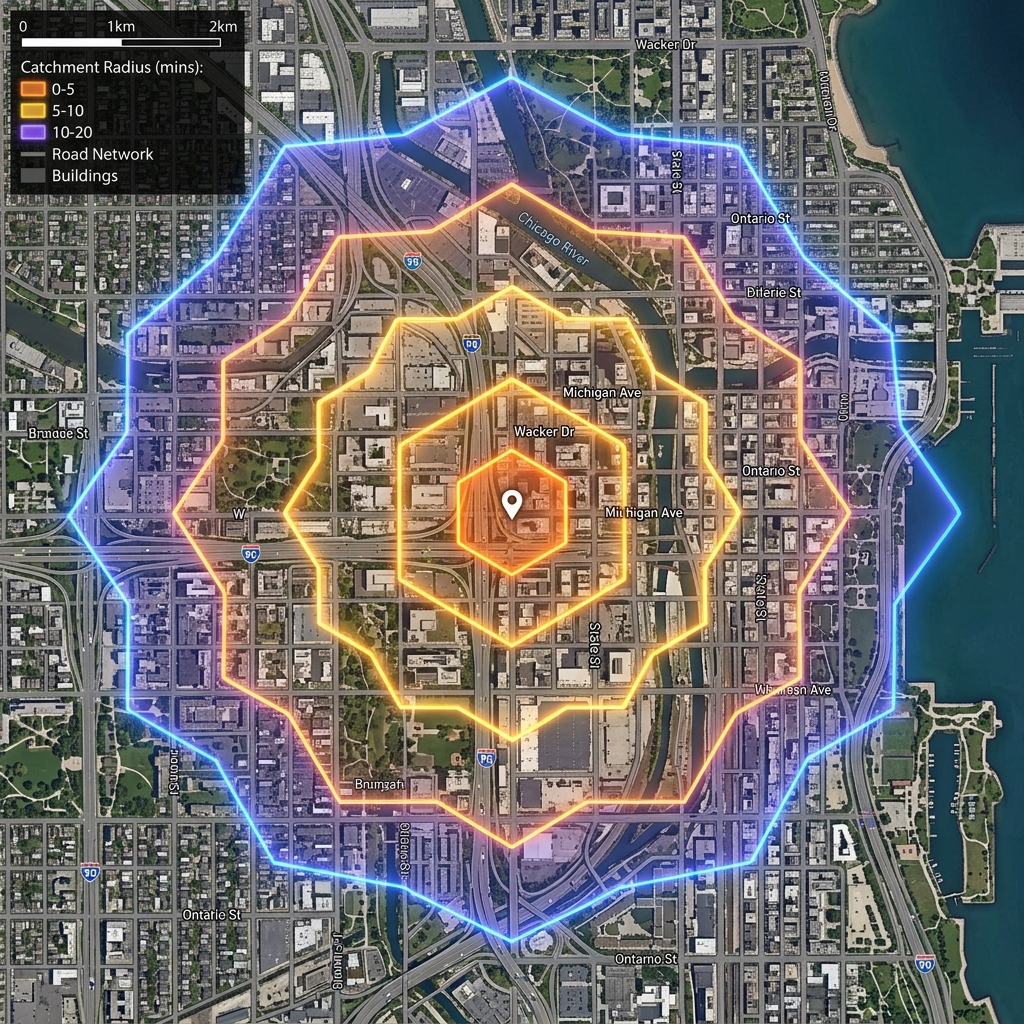

Consider a common task: you have a CSV of GPS points and you need to assign each one an H3 index at resolution 8, then reverse-geocode it against a polygon dataset (say, UK census output areas). Done sequentially in most languages, this is an I/O and CPU bottleneck at scale.

A naive Go loop is marginally faster than Python, but still leaves most of your CPU cores idle. The fix is to saturate your CPU with goroutines and use channels to coordinate the flow.

The Fan-Out / Fan-In Pattern

The pattern has three stages:

- Producer — reads input records and sends them to an input channel

- Workers — a pool of goroutines that read from the input channel and do the heavy lifting

- Collector — aggregates results from a results channel

```go
package main

import (
	"context"
	"sync"
)

// GeoRecord is a single input point.
type GeoRecord struct {
	ID  int
	Lat float64
	Lng float64
}

// EnrichedRecord is a GeoRecord plus the attributes the pipeline adds.
type EnrichedRecord struct {
	GeoRecord
	H3Index string
	OACode  string
}

// producer streams records into a buffered channel and closes it when done.
func producer(ctx context.Context, records []GeoRecord) <-chan GeoRecord {
	out := make(chan GeoRecord, 100)
	go func() {
		defer close(out)
		for _, r := range records {
			select {
			case <-ctx.Done():
				return
			case out <- r:
			}
		}
	}()
	return out
}
```

The producer goroutine closes the channel when it finishes, which signals downstream workers to stop naturally. The select on ctx.Done() means the whole pipeline shuts down cleanly if you cancel the context, for example on a timeout or keyboard interrupt.

The Worker Pool

```go
// worker enriches records from in and sends them to out until in is
// closed or ctx is cancelled.
func worker(
	ctx context.Context,
	in <-chan GeoRecord,
	out chan<- EnrichedRecord,
	wg *sync.WaitGroup,
) {
	defer wg.Done()
	for {
		select {
		case <-ctx.Done():
			return
		case record, ok := <-in:
			if !ok {
				return // channel closed
			}
			enriched := process(ctx, record)
			select {
			case <-ctx.Done():
				return
			case out <- enriched:
			}
		}
	}
}

// spawnWorkers starts n workers and returns the WaitGroup that tracks them.
func spawnWorkers(
	ctx context.Context,
	n int,
	in <-chan GeoRecord,
	out chan<- EnrichedRecord,
) *sync.WaitGroup {
	var wg sync.WaitGroup
	for i := 0; i < n; i++ {
		wg.Add(1)
		go worker(ctx, in, out, &wg)
	}
	return &wg
}
```

The sync.WaitGroup lets the pipeline know when all workers are done, so that the results channel can be closed.

Wiring It Together

```go
func RunPipeline(ctx context.Context, records []GeoRecord, numWorkers int) []EnrichedRecord {
	in := producer(ctx, records)
	results := make(chan EnrichedRecord, numWorkers*2)
	wg := spawnWorkers(ctx, numWorkers, in, results)

	// Close results channel once all workers are done
	go func() {
		wg.Wait()
		close(results)
	}()

	// Collect results
	var enriched []EnrichedRecord
	for r := range results {
		enriched = append(enriched, r)
	}
	return enriched
}
```

This is idiomatic Go: the pipeline drives itself. No polling, no mutexes on the output slice (the collector is single-threaded by design), and clean shutdown via context cancellation.
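One consequence of fanning in from several workers is that results arrive in completion order, not input order. If downstream code needs the original order, a small post-processing step can restore it. The sketch below assumes that ID increases with input position, which the post does not state; the types are repeated only so the snippet compiles on its own, and sortByID is a hypothetical helper.

```go
package main

import "sort"

// Types repeated from the pipeline so this snippet is self-contained.
type GeoRecord struct {
	ID  int
	Lat float64
	Lng float64
}

type EnrichedRecord struct {
	GeoRecord
	H3Index string
	OACode  string
}

// sortByID (hypothetical helper) reorders results in place by record ID.
// This restores input order only if IDs were assigned in input order.
func sortByID(recs []EnrichedRecord) {
	sort.Slice(recs, func(i, j int) bool {
		return recs[i].ID < recs[j].ID
	})
}
```

Calling sortByID on the slice returned by RunPipeline is enough; if the IDs carry no ordering, carrying an explicit sequence number on each record would serve the same purpose.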

The process Function: H3 Indexing

In a real pipeline, process might call a CGO binding to the H3 library, or use a pure-Go H3 implementation. Here is a simplified version:

```go
import "github.com/uber/h3-go/v4"

func process(ctx context.Context, r GeoRecord) EnrichedRecord {
	// Convert lat/lng to H3 cell at resolution 8.
	// Depending on the h3-go v4 release you pin, LatLngToCell may also
	// return an error; check the signature and handle it if so.
	cell := h3.LatLngToCell(h3.LatLng{Lat: r.Lat, Lng: r.Lng}, 8)

	return EnrichedRecord{
		GeoRecord: r,
		H3Index:   cell.String(),
		OACode:    lookupOA(ctx, cell), // lookupOA (not shown) maps the cell to an output area code
	}
}
```

At resolution 8, each H3 cell covers approximately 0.74 km² on average. The cell.String() method returns the hexadecimal index string (e.g. 8828308281fffff), which you can store in a database and index efficiently.

Benchmarking: How Many Workers?

On a 6-core machine, processing a dataset of 500,000 GPS points with varying worker counts:

| Workers | Time (s) | Throughput (records/s) |
|---|---|---|
| 1 | 18.4 | 27,174 |
| 4 | 5.2 | 96,154 |
| 8 | 2.9 | 172,414 |
| 16 | 2.8 | 178,571 |
| 32 | 3.1 | 161,290 |

Throughput plateaus between 8 and 16 workers on this 6-core machine, which puts the sweet spot at roughly 2 × numCPU. Beyond that, goroutine scheduling overhead and memory pressure start to erode gains. The right value depends on whether process is CPU-bound (fewer workers) or I/O-bound (more workers can help).

```go
import "runtime"

numWorkers := runtime.NumCPU() * 2
```

Error Handling and Graceful Cancellation

One pattern often skipped in tutorial code: propagating errors back through the pipeline.

```go
type Result struct {
	Record EnrichedRecord
	Err    error
}

// Use context to cancel the entire pipeline on first error
ctx, cancel := context.WithCancel(context.Background())
defer cancel()

// If a worker encounters a fatal error, call cancel()
// All other goroutines will exit on the next ctx.Done() check
```

For non-fatal errors (e.g. a single record fails to geocode), write the error into the Result struct and let the collector handle it. For fatal errors (database connection lost), cancel the context to drain and clean up the whole pipeline.

Why Go for Spatial Pipelines?

Go occupies an interesting niche for this kind of work. It is not as raw as C or Rust, but it is fast enough to process geo-data at scale without resorting to native extensions or multiprocessing hacks. The concurrency model — goroutines that cost around 2 KB of stack each, scheduled by the Go runtime rather than the OS — means you can run thousands of concurrent spatial operations without the overhead you would face with OS threads.

For spatial data engineering in particular, Go’s standard library, complemented by packages like github.com/uber/h3-go and github.com/twpayne/go-geom, provides a capable, type-safe foundation that integrates naturally with gRPC services, streaming APIs, and database drivers.

Next Steps

The pattern shown here scales well. Some directions to explore:

- Batched workers: rather than processing one record at a time, buffer records into batches before the H3 lookup to amortize per-record overhead (channel operations, function calls, database round trips)

- Backpressure: use buffered channels of a fixed size; if the results channel is full, workers block, naturally throttling the producer

- Pipeline stages as packages: each stage (read, enrich, validate, write) as a separate function returning a channel — composable and testable in isolation

- Observability: wrap process with a metrics counter and emit per-worker throughput to Prometheus

Go’s concurrency model makes it easy to build these pipelines incrementally without refactoring everything when performance requirements change.